#include "Target/AArch64/AArch64ISelLowering.h"

Public Member Functions | |

| AArch64TargetLowering (const TargetMachine &TM, const AArch64Subtarget &STI) | |

| CCAssignFn * | CCAssignFnForCall (CallingConv::ID CC, bool IsVarArg) const |

| Selects the correct CCAssignFn for a given CallingConvention value. More... | |

| CCAssignFn * | CCAssignFnForReturn (CallingConv::ID CC) const |

| Selects the correct CCAssignFn for a given CallingConvention value. More... | |

| void | computeKnownBitsForTargetNode (const SDValue Op, KnownBits &Known, const APInt &DemandedElts, const SelectionDAG &DAG, unsigned Depth=0) const override |

| Determine which bits of Op are known to be either zero or one and return them in Known. More... | |

| bool | targetShrinkDemandedConstant (SDValue Op, const APInt &Demanded, TargetLoweringOpt &TLO) const override |

| MVT | getScalarShiftAmountTy (const DataLayout &DL, EVT) const override |

| EVT is not used in-tree, but is used by out-of-tree targets. More... | |

| bool | allowsMisalignedMemoryAccesses (EVT VT, unsigned AddrSpace=0, unsigned Align=1, bool *Fast=nullptr) const override |

| Returns true if the target allows unaligned memory accesses of the specified type. More... | |

| SDValue | LowerOperation (SDValue Op, SelectionDAG &DAG) const override |

| Provide custom lowering hooks for some operations. More... | |

| const char * | getTargetNodeName (unsigned Opcode) const override |

| This method returns the name of a target specific DAG node. More... | |

| SDValue | PerformDAGCombine (SDNode *N, DAGCombinerInfo &DCI) const override |

| This method will be invoked for all target nodes and for any target-independent nodes that the target has registered to have it invoked for. More... | |

| bool | isNoopAddrSpaceCast (unsigned SrcAS, unsigned DestAS) const override |

| Returns true if a cast between SrcAS and DestAS is a noop. More... | |

| FastISel * | createFastISel (FunctionLoweringInfo &funcInfo, const TargetLibraryInfo *libInfo) const override |

| This method returns a target specific FastISel object, or null if the target does not support "fast" ISel. More... | |

| bool | isOffsetFoldingLegal (const GlobalAddressSDNode *GA) const override |

| Return true if folding a constant offset with the given GlobalAddress is legal. More... | |

| bool | isFPImmLegal (const APFloat &Imm, EVT VT) const override |

| Returns true if the target can instruction select the specified FP immediate natively. More... | |

| bool | isShuffleMaskLegal (ArrayRef< int > M, EVT VT) const override |

| Return true if the given shuffle mask can be codegen'd directly, or if it should be stack expanded. More... | |

| EVT | getSetCCResultType (const DataLayout &DL, LLVMContext &Context, EVT VT) const override |

| Return the ISD::SETCC ValueType. More... | |

| SDValue | ReconstructShuffle (SDValue Op, SelectionDAG &DAG) const |

| MachineBasicBlock * | EmitF128CSEL (MachineInstr &MI, MachineBasicBlock *BB) const |

| MachineBasicBlock * | EmitLoweredCatchRet (MachineInstr &MI, MachineBasicBlock *BB) const |

| MachineBasicBlock * | EmitLoweredCatchPad (MachineInstr &MI, MachineBasicBlock *BB) const |

| MachineBasicBlock * | EmitInstrWithCustomInserter (MachineInstr &MI, MachineBasicBlock *MBB) const override |

| This method should be implemented by targets that mark instructions with the 'usesCustomInserter' flag. More... | |

| bool | getTgtMemIntrinsic (IntrinsicInfo &Info, const CallInst &I, MachineFunction &MF, unsigned Intrinsic) const override |

| getTgtMemIntrinsic - Represent NEON load and store intrinsics as MemIntrinsicNodes. More... | |

| bool | shouldReduceLoadWidth (SDNode *Load, ISD::LoadExtType ExtTy, EVT NewVT) const override |

| Return true if it is profitable to reduce a load to a smaller type. More... | |

| bool | isTruncateFree (Type *Ty1, Type *Ty2) const override |

| Return true if it's free to truncate a value of type Ty1 to type Ty2. More... | |

| bool | isTruncateFree (EVT VT1, EVT VT2) const override |

| bool | isProfitableToHoist (Instruction *I) const override |

| Check if it is profitable to hoist instruction in then/else to if. More... | |

| bool | isZExtFree (Type *Ty1, Type *Ty2) const override |

| Return true if any actual instruction that defines a value of type Ty1 implicitly zero-extends the value to Ty2 in the result register. More... | |

| bool | isZExtFree (EVT VT1, EVT VT2) const override |

| bool | isZExtFree (SDValue Val, EVT VT2) const override |

| Return true if zero-extending the specific node Val to type VT2 is free (either because it's implicitly zero-extended such as ARM ldrb / ldrh or because it's folded such as X86 zero-extending loads). More... | |

| bool | hasPairedLoad (EVT LoadedType, unsigned &RequiredAligment) const override |

| Return true if the target provides a paired load and can combine into it two loaded values of type LoadedType that are next to each other in memory. More... | |

| unsigned | getMaxSupportedInterleaveFactor () const override |

| Get the maximum supported factor for interleaved memory accesses. More... | |

| bool | lowerInterleavedLoad (LoadInst *LI, ArrayRef< ShuffleVectorInst *> Shuffles, ArrayRef< unsigned > Indices, unsigned Factor) const override |

| Lower an interleaved load into a ldN intrinsic. More... | |

| bool | lowerInterleavedStore (StoreInst *SI, ShuffleVectorInst *SVI, unsigned Factor) const override |

| Lower an interleaved store into a stN intrinsic. More... | |

| bool | isLegalAddImmediate (int64_t) const override |

| Return true if the specified immediate is a legal add immediate, that is, the target has add instructions which can add a register and the immediate without having to materialize the immediate into a register. More... | |

| bool | isLegalICmpImmediate (int64_t) const override |

| Return true if the specified immediate is a legal icmp immediate, that is, the target has icmp instructions which can compare a register against the immediate without having to materialize the immediate into a register. More... | |

| bool | shouldConsiderGEPOffsetSplit () const override |

| EVT | getOptimalMemOpType (uint64_t Size, unsigned DstAlign, unsigned SrcAlign, bool IsMemset, bool ZeroMemset, bool MemcpyStrSrc, MachineFunction &MF) const override |

| Returns the target specific optimal type for load and store operations as a result of memset, memcpy, and memmove lowering. More... | |

| bool | isLegalAddressingMode (const DataLayout &DL, const AddrMode &AM, Type *Ty, unsigned AS, Instruction *I=nullptr) const override |

| Return true if the addressing mode represented by AM is legal for this target, for a load/store of the specified type. More... | |

| int | getScalingFactorCost (const DataLayout &DL, const AddrMode &AM, Type *Ty, unsigned AS) const override |

| Return the cost of the scaling factor used in the addressing mode represented by AM for this target, for a load/store of the specified type. More... | |

| bool | isFMAFasterThanFMulAndFAdd (EVT VT) const override |

| Return true if an FMA operation is faster than a pair of fmul and fadd instructions. More... | |

| const MCPhysReg * | getScratchRegisters (CallingConv::ID CC) const override |

| Returns a 0 terminated array of registers that can be safely used as scratch registers. More... | |

| bool | isDesirableToCommuteWithShift (const SDNode *N, CombineLevel Level) const override |

| Returns false if N is a bit extraction pattern of (X >> C) & Mask. More... | |

| bool | shouldConvertConstantLoadToIntImm (const APInt &Imm, Type *Ty) const override |

| Returns true if it is beneficial to convert a load of a constant to just the constant itself. More... | |

| bool | isExtractSubvectorCheap (EVT ResVT, EVT SrcVT, unsigned Index) const override |

| Return true if EXTRACT_SUBVECTOR is cheap for this result type with this index. More... | |

| Value * | emitLoadLinked (IRBuilder<> &Builder, Value *Addr, AtomicOrdering Ord) const override |

| Perform a load-linked operation on Addr, returning a "Value *" with the corresponding pointee type. More... | |

| Value * | emitStoreConditional (IRBuilder<> &Builder, Value *Val, Value *Addr, AtomicOrdering Ord) const override |

| Perform a store-conditional operation to Addr. More... | |

| void | emitAtomicCmpXchgNoStoreLLBalance (IRBuilder<> &Builder) const override |

| TargetLoweringBase::AtomicExpansionKind | shouldExpandAtomicLoadInIR (LoadInst *LI) const override |

| Returns how the given (atomic) load should be expanded by the IR-level AtomicExpand pass. More... | |

| bool | shouldExpandAtomicStoreInIR (StoreInst *SI) const override |

| Returns true if the given (atomic) store should be expanded by the IR-level AtomicExpand pass into an "atomic xchg" which ignores its input. More... | |

| TargetLoweringBase::AtomicExpansionKind | shouldExpandAtomicRMWInIR (AtomicRMWInst *AI) const override |

| Returns how the IR-level AtomicExpand pass should expand the given AtomicRMW, if at all. More... | |

| TargetLoweringBase::AtomicExpansionKind | shouldExpandAtomicCmpXchgInIR (AtomicCmpXchgInst *AI) const override |

| Returns how the given atomic cmpxchg should be expanded by the IR-level AtomicExpand pass. More... | |

| bool | useLoadStackGuardNode () const override |

| If this function returns true, SelectionDAGBuilder emits a LOAD_STACK_GUARD node when it is lowering Intrinsic::stackprotector. More... | |

| TargetLoweringBase::LegalizeTypeAction | getPreferredVectorAction (MVT VT) const override |

| Return the preferred vector type legalization action. More... | |

| Value * | getIRStackGuard (IRBuilder<> &IRB) const override |

| If the target has a standard location for the stack protector cookie, returns the address of that location. More... | |

| void | insertSSPDeclarations (Module &M) const override |

| Inserts necessary declarations for SSP (stack protection) purpose. More... | |

| Value * | getSDagStackGuard (const Module &M) const override |

| Return the variable that's previously inserted by insertSSPDeclarations, if any, otherwise return nullptr. More... | |

| Value * | getSSPStackGuardCheck (const Module &M) const override |

| If the target has a standard stack protection check function that performs validation and error handling, returns the function. More... | |

| Value * | getSafeStackPointerLocation (IRBuilder<> &IRB) const override |

| If the target has a standard location for the unsafe stack pointer, returns the address of that location. More... | |

| unsigned | getExceptionPointerRegister (const Constant *PersonalityFn) const override |

| If a physical register, this returns the register that receives the exception address on entry to an EH pad. More... | |

| unsigned | getExceptionSelectorRegister (const Constant *PersonalityFn) const override |

| If a physical register, this returns the register that receives the exception typeid on entry to a landing pad. More... | |

| bool | isIntDivCheap (EVT VT, AttributeList Attr) const override |

| Return true if integer divide is usually cheaper than a sequence of several shifts, adds, and multiplies for this target. More... | |

| bool | canMergeStoresTo (unsigned AddressSpace, EVT MemVT, const SelectionDAG &DAG) const override |

| Returns true if it's reasonable to merge stores to MemVT size. More... | |

| bool | isCheapToSpeculateCttz () const override |

| Return true if it is cheap to speculate a call to intrinsic cttz. More... | |

| bool | isCheapToSpeculateCtlz () const override |

| Return true if it is cheap to speculate a call to intrinsic ctlz. More... | |

| bool | isMaskAndCmp0FoldingBeneficial (const Instruction &AndI) const override |

| Return if the target supports combining a chain like: More... | |

| bool | hasAndNotCompare (SDValue V) const override |

| Return true if the target should transform: (X & Y) == Y --> (~X & Y) == 0 and (X & Y) != Y --> (~X & Y) != 0. More... | |
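
The rewrite described for hasAndNotCompare relies on a plain bitwise identity. The following is a minimal standalone sketch, not LLVM code, that checks the identity on small operands; the function names are hypothetical and chosen only for illustration.

```cpp
#include <cassert>
#include <cstdint>

// Original form: true when every bit set in Y is also set in X.
constexpr bool maskedEq(uint32_t X, uint32_t Y) { return (X & Y) == Y; }

// Transformed form: the same predicate written with an and-not.
constexpr bool andNotIsZero(uint32_t X, uint32_t Y) { return (~X & Y) == 0; }

// Compare both forms over all pairs of 8-bit operand values.
inline void checkAndNotCompare() {
  for (uint32_t X = 0; X < 256; ++X)
    for (uint32_t Y = 0; Y < 256; ++Y)
      assert(maskedEq(X, Y) == andNotIsZero(X, Y));
}
```

The != variant follows by negating both sides, so a target with a flag-setting and-not instruction can presumably use the right-hand form for either comparison.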

| bool | hasAndNot (SDValue Y) const override |

| Return true if the target has a bitwise and-not operation: X = ~A & B. This can be used to simplify select or other instructions. More... | |
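
As one illustration of how an and-not can simplify a select, a bitwise select can be written as two masked terms, one of which is exactly the ~A & B shape. This is a standalone sketch with a hypothetical helper name, not the combine LLVM performs.

```cpp
#include <cassert>
#include <cstdint>

// Bitwise select: take bits of A where Mask is 1 and bits of B where Mask is 0.
// The second term has the and-not shape (~Mask & B).
constexpr uint32_t bitSelect(uint32_t Mask, uint32_t A, uint32_t B) {
  return (Mask & A) | (~Mask & B);
}

inline void checkBitSelect() {
  // Upper half comes from A, lower half from B.
  assert(bitSelect(0xFFFF0000u, 0x12345678u, 0x9ABCDEF0u) == 0x1234DEF0u);
}
```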

| bool | shouldTransformSignedTruncationCheck (EVT XVT, unsigned KeptBits) const override |

| Should we transform the IR-optimal check for whether a given truncation down into KeptBits would be truncating or not: (add x, (1 << (KeptBits-1))) srccond (1 << KeptBits) into its more traditional form: ((x << C) a>> C) dstcond x. Return true if we should transform. More... | |
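
The two forms of the signed truncation check can be compared directly in plain C++. The sketch below assumes a 32-bit x, takes srccond to be unsigned less-than and dstcond to be equality (one pairing consistent with the formula), and uses C = BitWidth - KeptBits; all names are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

constexpr unsigned BitWidth = 32;

// Add-and-compare form: bias X, then do one unsigned comparison.
// Requires 1 <= KeptBits < BitWidth.
constexpr bool fitsAddCmp(uint32_t X, unsigned KeptBits) {
  return X + (uint32_t{1} << (KeptBits - 1)) < (uint32_t{1} << KeptBits);
}

// Shift-pair form: sign-extend the low KeptBits and compare with X.
// The signed conversion and right shift are well defined (arithmetic) as of
// C++20; older standards leave them implementation-defined.
inline bool fitsShiftPair(uint32_t X, unsigned KeptBits) {
  const unsigned C = BitWidth - KeptBits;
  const int32_t SExt = static_cast<int32_t>(X << C) >> C;
  return static_cast<uint32_t>(SExt) == X;
}

// Both forms should agree, and both should accept exactly [-128, 127]
// when KeptBits is 8.
inline void checkSignedTruncation() {
  for (int32_t V = -1024; V <= 1024; ++V) {
    const uint32_t X = static_cast<uint32_t>(V);
    assert(fitsAddCmp(X, 8) == fitsShiftPair(X, 8));
    assert(fitsAddCmp(X, 8) == (V >= -128 && V <= 127));
  }
}
```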

| bool | hasBitPreservingFPLogic (EVT VT) const override |

| Return true if it is safe to transform an integer-domain bitwise operation into the equivalent floating-point operation. More... | |

| bool | supportSplitCSR (MachineFunction *MF) const override |

| Return true if the target supports that a subset of CSRs for the given machine function is handled explicitly via copies. More... | |

| void | initializeSplitCSR (MachineBasicBlock *Entry) const override |

| Perform necessary initialization to handle a subset of CSRs explicitly via copies. More... | |

| void | insertCopiesSplitCSR (MachineBasicBlock *Entry, const SmallVectorImpl< MachineBasicBlock *> &Exits) const override |

| Insert explicit copies in entry and exit blocks. More... | |

| bool | supportSwiftError () const override |

| Return true if the target supports swifterror attribute. More... | |

| bool | enableAggressiveFMAFusion (EVT VT) const override |

| Enable aggressive FMA fusion on targets that want it. More... | |

| unsigned | getVaListSizeInBits (const DataLayout &DL) const override |

| Returns the size of the platform's va_list object. More... | |

| bool | isLegalInterleavedAccessType (VectorType *VecTy, const DataLayout &DL) const |

| Returns true if VecTy is a legal interleaved access type. More... | |

| unsigned | getNumInterleavedAccesses (VectorType *VecTy, const DataLayout &DL) const |

| Returns the number of interleaved accesses that will be generated when lowering accesses of the given type. More... | |

| MachineMemOperand::Flags | getMMOFlags (const Instruction &I) const override |

| This callback is used to inspect load/store instructions and add target-specific MachineMemOperand flags to them. More... | |

| bool | functionArgumentNeedsConsecutiveRegisters (Type *Ty, CallingConv::ID CallConv, bool isVarArg) const override |

| For some targets, an LLVM struct type must be broken down into multiple simple types, but the calling convention specifies that the entire struct must be passed in a block of consecutive registers. More... | |

| bool | needsFixedCatchObjects () const override |

| Used for exception handling on Win64. More... | |

Public Member Functions inherited from llvm::TargetLowering | |

| TargetLowering (const TargetLowering &)=delete | |

| TargetLowering & | operator= (const TargetLowering &)=delete |

| TargetLowering (const TargetMachine &TM) | |

| NOTE: The TargetMachine owns TLOF. More... | |

| bool | isPositionIndependent () const |

| virtual bool | isSDNodeSourceOfDivergence (const SDNode *N, FunctionLoweringInfo *FLI, LegacyDivergenceAnalysis *DA) const |

| virtual bool | isSDNodeAlwaysUniform (const SDNode *N) const |

| virtual unsigned | getJumpTableEncoding () const |

| Return the entry encoding for a jump table in the current function. More... | |

| virtual const MCExpr * | LowerCustomJumpTableEntry (const MachineJumpTableInfo *, const MachineBasicBlock *, unsigned, MCContext &) const |

| virtual SDValue | getPICJumpTableRelocBase (SDValue Table, SelectionDAG &DAG) const |

| Returns relocation base for the given PIC jumptable. More... | |

| virtual const MCExpr * | getPICJumpTableRelocBaseExpr (const MachineFunction *MF, unsigned JTI, MCContext &Ctx) const |

| This returns the relocation base for the given PIC jumptable, the same as getPICJumpTableRelocBase, but as an MCExpr. More... | |

| bool | isInTailCallPosition (SelectionDAG &DAG, SDNode *Node, SDValue &Chain) const |

| Check whether a given call node is in tail position within its function. More... | |

| void | softenSetCCOperands (SelectionDAG &DAG, EVT VT, SDValue &NewLHS, SDValue &NewRHS, ISD::CondCode &CCCode, const SDLoc &DL) const |

| Soften the operands of a comparison. More... | |

| std::pair< SDValue, SDValue > | makeLibCall (SelectionDAG &DAG, RTLIB::Libcall LC, EVT RetVT, ArrayRef< SDValue > Ops, bool isSigned, const SDLoc &dl, bool doesNotReturn=false, bool isReturnValueUsed=true) const |

| Returns a pair of (return value, chain). More... | |

| bool | parametersInCSRMatch (const MachineRegisterInfo &MRI, const uint32_t *CallerPreservedMask, const SmallVectorImpl< CCValAssign > &ArgLocs, const SmallVectorImpl< SDValue > &OutVals) const |

| Check whether parameters to a call that are passed in callee saved registers are the same as from the calling function. More... | |

| bool | ShrinkDemandedConstant (SDValue Op, const APInt &Demanded, TargetLoweringOpt &TLO) const |

| Check to see if the specified operand of the specified instruction is a constant integer. More... | |

| bool | ShrinkDemandedOp (SDValue Op, unsigned BitWidth, const APInt &Demanded, TargetLoweringOpt &TLO) const |

| Convert x+y to (VT)((SmallVT)x+(SmallVT)y) if the casts are free. More... | |
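
The narrowing in ShrinkDemandedOp is sound because the low bits of a sum depend only on the low bits of the operands. The following standalone sketch shows this for a 32-bit add of which only the low 16 bits are demanded; the widths and names are assumptions made for illustration.

```cpp
#include <cassert>
#include <cstdint>

// Wide add followed by truncation to the 16 demanded bits.
constexpr uint16_t addThenTruncate(uint32_t X, uint32_t Y) {
  return static_cast<uint16_t>(X + Y);
}

// Truncate first, add the narrow values, and keep 16 bits of the result.
constexpr uint16_t narrowAdd(uint32_t X, uint32_t Y) {
  return static_cast<uint16_t>(static_cast<uint16_t>(X) +
                               static_cast<uint16_t>(Y));
}

inline void checkShrinkAdd() {
  const uint32_t Samples[] = {0u, 1u, 0xFFFFu, 0x10002u, 0x1FFFFu,
                              0x80000000u, 0xFFFFFFFFu};
  for (uint32_t X : Samples)
    for (uint32_t Y : Samples)
      assert(addThenTruncate(X, Y) == narrowAdd(X, Y));
}
```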

| bool | SimplifyDemandedBits (SDValue Op, const APInt &DemandedBits, const APInt &DemandedElts, KnownBits &Known, TargetLoweringOpt &TLO, unsigned Depth=0, bool AssumeSingleUse=false) const |

| Look at Op. More... | |

| bool | SimplifyDemandedBits (SDValue Op, const APInt &DemandedBits, KnownBits &Known, TargetLoweringOpt &TLO, unsigned Depth=0, bool AssumeSingleUse=false) const |

| Helper wrapper around SimplifyDemandedBits, demanding all elements. More... | |

| bool | SimplifyDemandedBits (SDValue Op, const APInt &DemandedMask, DAGCombinerInfo &DCI) const |

| Helper wrapper around SimplifyDemandedBits. More... | |

| bool | SimplifyDemandedVectorElts (SDValue Op, const APInt &DemandedEltMask, APInt &KnownUndef, APInt &KnownZero, TargetLoweringOpt &TLO, unsigned Depth=0, bool AssumeSingleUse=false) const |

| Look at Vector Op. More... | |

| bool | SimplifyDemandedVectorElts (SDValue Op, const APInt &DemandedElts, APInt &KnownUndef, APInt &KnownZero, DAGCombinerInfo &DCI) const |

| Helper wrapper around SimplifyDemandedVectorElts. More... | |

| virtual void | computeKnownBitsForFrameIndex (const SDValue FIOp, KnownBits &Known, const APInt &DemandedElts, const SelectionDAG &DAG, unsigned Depth=0) const |

| Determine which of the bits of FrameIndex FIOp are known to be 0. More... | |

| virtual unsigned | ComputeNumSignBitsForTargetNode (SDValue Op, const APInt &DemandedElts, const SelectionDAG &DAG, unsigned Depth=0) const |

| This method can be implemented by targets that want to expose additional information about sign bits to the DAG Combiner. More... | |

| virtual bool | SimplifyDemandedVectorEltsForTargetNode (SDValue Op, const APInt &DemandedElts, APInt &KnownUndef, APInt &KnownZero, TargetLoweringOpt &TLO, unsigned Depth=0) const |

| Attempt to simplify any target nodes based on the demanded vector elements, returning true on success. More... | |

| virtual bool | SimplifyDemandedBitsForTargetNode (SDValue Op, const APInt &DemandedBits, const APInt &DemandedElts, KnownBits &Known, TargetLoweringOpt &TLO, unsigned Depth=0) const |

| Attempt to simplify any target nodes based on the demanded bits/elts, returning true on success. More... | |

| virtual bool | isKnownNeverNaNForTargetNode (SDValue Op, const SelectionDAG &DAG, bool SNaN=false, unsigned Depth=0) const |

| If SNaN is false,. More... | |

| bool | isConstTrueVal (const SDNode *N) const |

| Return true if N is a constant or constant vector equal to the true value from getBooleanContents(). More... | |

| bool | isConstFalseVal (const SDNode *N) const |

| Return true if N is a constant or constant vector equal to the false value from getBooleanContents(). More... | |

| bool | isExtendedTrueVal (const ConstantSDNode *N, EVT VT, bool SExt) const |

| Return true if N is a true value when extended to VT. More... | |

| SDValue | SimplifySetCC (EVT VT, SDValue N0, SDValue N1, ISD::CondCode Cond, bool foldBooleans, DAGCombinerInfo &DCI, const SDLoc &dl) const |

| Try to simplify a setcc built with the specified operands and cc. More... | |

| virtual SDValue | unwrapAddress (SDValue N) const |

| virtual bool | isGAPlusOffset (SDNode *N, const GlobalValue *&GA, int64_t &Offset) const |

| Returns true (and the GlobalValue and the offset) if the node is a GlobalAddress + offset. More... | |

| virtual bool | shouldFoldShiftPairToMask (const SDNode *N, CombineLevel Level) const |

| Return true if it is profitable to fold a pair of shifts into a mask. More... | |

| virtual bool | isDesirableToCombineBuildVectorToShuffleTruncate (ArrayRef< int > ShuffleMask, EVT SrcVT, EVT TruncVT) const |

| virtual bool | isTypeDesirableForOp (unsigned, EVT VT) const |

| Return true if the target has native support for the specified value type and it is 'desirable' to use the type for the given node type. More... | |

| virtual bool | isDesirableToTransformToIntegerOp (unsigned, EVT) const |

| Return true if it is profitable for the DAG combiner to transform a floating-point op of the specified opcode to an equivalent op of an integer type. More... | |

| virtual bool | IsDesirableToPromoteOp (SDValue, EVT &) const |

| This method queries the target on whether it is beneficial for the DAG combiner to promote the specified node. More... | |

| std::pair< SDValue, SDValue > | LowerCallTo (CallLoweringInfo &CLI) const |

| This function lowers an abstract call to a function into an actual call. More... | |

| virtual void | HandleByVal (CCState *, unsigned &, unsigned) const |

| Target-specific cleanup for formal ByVal parameters. More... | |

| virtual const char * | getClearCacheBuiltinName () const |

| Return the builtin name for the __builtin___clear_cache intrinsic. Default is to invoke the clear cache library call. More... | |

| virtual EVT | getTypeForExtReturn (LLVMContext &Context, EVT VT, ISD::NodeType) const |

| Return the type that should be used to zero or sign extend a zeroext/signext integer return value. More... | |

| virtual SDValue | prepareVolatileOrAtomicLoad (SDValue Chain, const SDLoc &DL, SelectionDAG &DAG) const |

| This callback is used to prepare for a volatile or atomic load. More... | |

| virtual void | LowerOperationWrapper (SDNode *N, SmallVectorImpl< SDValue > &Results, SelectionDAG &DAG) const |

| This callback is invoked by the type legalizer to legalize nodes with an illegal operand type but legal result types. More... | |

| bool | verifyReturnAddressArgumentIsConstant (SDValue Op, SelectionDAG &DAG) const |

| virtual bool | ExpandInlineAsm (CallInst *) const |

| This hook allows the target to expand an inline asm call to be explicit llvm code if it wants to. More... | |

| virtual AsmOperandInfoVector | ParseConstraints (const DataLayout &DL, const TargetRegisterInfo *TRI, ImmutableCallSite CS) const |

| Split up the constraint string from the inline assembly value into the specific constraints and their prefixes, and also tie in the associated operand values. More... | |

| virtual ConstraintWeight | getMultipleConstraintMatchWeight (AsmOperandInfo &info, int maIndex) const |

| Examine constraint type and operand type and determine a weight value. More... | |

| virtual void | ComputeConstraintToUse (AsmOperandInfo &OpInfo, SDValue Op, SelectionDAG *DAG=nullptr) const |

| Determines the constraint code and constraint type to use for the specific AsmOperandInfo, setting OpInfo.ConstraintCode and OpInfo.ConstraintType. More... | |

| SDValue | BuildSDIV (SDNode *N, SelectionDAG &DAG, bool IsAfterLegalization, SmallVectorImpl< SDNode *> &Created) const |

| Given an ISD::SDIV node expressing a divide by constant, return a DAG expression to select that will generate the same value by multiplying by a magic number. More... | |

| SDValue | BuildUDIV (SDNode *N, SelectionDAG &DAG, bool IsAfterLegalization, SmallVectorImpl< SDNode *> &Created) const |

| Given an ISD::UDIV node expressing a divide by constant, return a DAG expression to select that will generate the same value by multiplying by a magic number. More... | |
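
Division by a constant via a "magic number" replaces the divide with a widening multiply and a shift. The sketch below hard-codes two well-known 32-bit constants, for dividing by 3 and by 10. It does not show how LLVM derives such constants, and the function names are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

// X / 3 using the multiplier (2^33 + 1) / 3 = 0xAAAAAAAB and a shift by 33.
constexpr uint32_t divideBy3(uint32_t X) {
  return static_cast<uint32_t>((uint64_t{X} * 0xAAAAAAABull) >> 33);
}

// X / 10 using the multiplier (2^35 + 2) / 10 = 0xCCCCCCCD and a shift by 35.
constexpr uint32_t divideBy10(uint32_t X) {
  return static_cast<uint32_t>((uint64_t{X} * 0xCCCCCCCDull) >> 35);
}

// Compare against the real division on a stride of values plus the maximum.
inline void checkMagicDivision() {
  for (uint64_t I = 0; I <= 0xFFFFFFFFull; I += 65521) {
    const uint32_t X = static_cast<uint32_t>(I);
    assert(divideBy3(X) == X / 3);
    assert(divideBy10(X) == X / 10);
  }
  assert(divideBy3(0xFFFFFFFFu) == 0xFFFFFFFFu / 3);
  assert(divideBy10(0xFFFFFFFFu) == 0xFFFFFFFFu / 10);
}
```

Other divisors can need an extra add-and-shift fixup step, which this sketch leaves out.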

| bool | expandMUL_LOHI (unsigned Opcode, EVT VT, SDLoc dl, SDValue LHS, SDValue RHS, SmallVectorImpl< SDValue > &Result, EVT HiLoVT, SelectionDAG &DAG, MulExpansionKind Kind, SDValue LL=SDValue(), SDValue LH=SDValue(), SDValue RL=SDValue(), SDValue RH=SDValue()) const |

| Expand a MUL or [US]MUL_LOHI of n-bit values into two or four nodes, respectively, each computing an n/2-bit part of the result. More... | |
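
To illustrate the idea of building an n-bit multiply from n/2-bit parts, the sketch below computes a 64-bit MUL (the low 64 bits of the product) from 32-bit halves. The LL, LH, RL and RH names mirror the parameter names in the signature, but the code is a standalone approximation and not the DAG expansion itself.

```cpp
#include <cassert>
#include <cstdint>

// 64-bit multiply assembled from 32-bit halves of each operand.
inline uint64_t mul64ViaHalves(uint64_t L, uint64_t R) {
  const uint32_t LL = static_cast<uint32_t>(L);
  const uint32_t LH = static_cast<uint32_t>(L >> 32);
  const uint32_t RL = static_cast<uint32_t>(R);
  const uint32_t RH = static_cast<uint32_t>(R >> 32);

  // Full 32x32->64 product of the low halves.
  const uint64_t LoProd = uint64_t{LL} * RL;
  const uint32_t Lo = static_cast<uint32_t>(LoProd);

  // The cross terms only affect the high half, so 32-bit wrapping multiplies
  // and adds are enough; LH * RH would land above bit 63 and is dropped.
  const uint32_t Hi = static_cast<uint32_t>(LoProd >> 32) + LL * RH + LH * RL;

  return (uint64_t{Hi} << 32) | Lo;
}

inline void checkMulViaHalves() {
  const uint64_t Samples[] = {0ull, 1ull, 0xFFFFFFFFull, 0x100000000ull,
                              0x123456789ABCDEF0ull, 0xFFFFFFFFFFFFFFFFull};
  for (uint64_t L : Samples)
    for (uint64_t R : Samples)
      assert(mul64ViaHalves(L, R) == L * R);
}
```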

| bool | expandMUL (SDNode *N, SDValue &Lo, SDValue &Hi, EVT HiLoVT, SelectionDAG &DAG, MulExpansionKind Kind, SDValue LL=SDValue(), SDValue LH=SDValue(), SDValue RL=SDValue(), SDValue RH=SDValue()) const |

| Expand a MUL into two nodes. More... | |

| bool | expandFunnelShift (SDNode *N, SDValue &Result, SelectionDAG &DAG) const |

| Expand funnel shift. More... | |
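
A funnel shift left concatenates two values, shifts the pair, and keeps the high half. The following is a minimal sketch of that semantics for 32-bit operands, written with ordinary shifts; the helper name is hypothetical and the real expansion may use a different node sequence.

```cpp
#include <cassert>
#include <cstdint>

// fshl: shift the 64-bit concatenation X:Y left by Z mod 32, keep the top 32
// bits. The zero-shift case is handled separately to avoid shifting by 32.
constexpr uint32_t fshl32(uint32_t X, uint32_t Y, uint32_t Z) {
  return (Z % 32) == 0 ? X : (X << (Z % 32)) | (Y >> (32 - (Z % 32)));
}

inline void checkFunnelShift() {
  assert(fshl32(0x12345678u, 0x9ABCDEF0u, 8) == 0x3456789Au);
  assert(fshl32(0x12345678u, 0x9ABCDEF0u, 32) == 0x12345678u);
}
```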

| bool | expandROT (SDNode *N, SDValue &Result, SelectionDAG &DAG) const |

| Expand rotations. More... | |
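
A rotate can be expressed with two shifts and an OR when no native rotate is available. The sketch below shows the common masked-shift idiom for 32-bit values; it is an illustration under that assumption, with hypothetical names, and not a transcription of the DAG nodes LLVM emits.

```cpp
#include <cassert>
#include <cstdint>

// Rotate left: masking both amounts keeps every shift in the range [0, 31],
// so a rotate amount of zero is handled without a special case.
constexpr uint32_t rotl32(uint32_t X, uint32_t C) {
  return (X << (C & 31)) | (X >> ((0u - C) & 31));
}

// Rotate right: the mirror image of rotl32.
constexpr uint32_t rotr32(uint32_t X, uint32_t C) {
  return (X >> (C & 31)) | (X << ((0u - C) & 31));
}

inline void checkRotate() {
  assert(rotl32(0x12345678u, 8) == 0x34567812u);
  assert(rotr32(0x12345678u, 8) == 0x78123456u);
  assert(rotl32(0x12345678u, 0) == 0x12345678u);
}
```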

| bool | expandFP_TO_SINT (SDNode *N, SDValue &Result, SelectionDAG &DAG) const |

| Expand float(f32) to SINT(i64) conversion. More... | |

| bool | expandFP_TO_UINT (SDNode *N, SDValue &Result, SelectionDAG &DAG) const |

| Expand float to UINT conversion. More... | |

| bool | expandUINT_TO_FP (SDNode *N, SDValue &Result, SelectionDAG &DAG) const |

| Expand UINT(i64) to double(f64) conversion. More... | |

| SDValue | expandFMINNUM_FMAXNUM (SDNode *N, SelectionDAG &DAG) const |

| Expand fminnum/fmaxnum into fminnum_ieee/fmaxnum_ieee with quieted inputs. More... | |

| bool | expandCTPOP (SDNode *N, SDValue &Result, SelectionDAG &DAG) const |

| Expand CTPOP nodes. More... | |
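
When a target has no population-count instruction, CTPOP can be computed with the classic parallel bit-counting sequence. The sketch below shows that sequence for 32-bit values; whether the LLVM expansion matches it step for step is an assumption, and the function name is hypothetical.

```cpp
#include <cassert>
#include <cstdint>

// Parallel bit count: sum adjacent 1-bit, 2-bit and 4-bit fields in place,
// then add the four byte counts with a multiply.
constexpr uint32_t popcount32(uint32_t V) {
  V = V - ((V >> 1) & 0x55555555u);                 // 2-bit field sums
  V = (V & 0x33333333u) + ((V >> 2) & 0x33333333u); // 4-bit field sums
  V = (V + (V >> 4)) & 0x0F0F0F0Fu;                 // per-byte sums
  return (V * 0x01010101u) >> 24;                   // total in the top byte
}

inline void checkPopcount() {
  assert(popcount32(0u) == 0u);
  assert(popcount32(0xF0u) == 4u);
  assert(popcount32(0xFFFFFFFFu) == 32u);
}
```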

| bool | expandCTLZ (SDNode *N, SDValue &Result, SelectionDAG &DAG) const |

| Expand CTLZ/CTLZ_ZERO_UNDEF nodes. More... | |

| bool | expandCTTZ (SDNode *N, SDValue &Result, SelectionDAG &DAG) const |

| Expand CTTZ/CTTZ_ZERO_UNDEF nodes. More... | |

| bool | expandABS (SDNode *N, SDValue &Result, SelectionDAG &DAG) const |

| Expand ABS nodes. More... | |
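
One branch-free way to compute an absolute value is to XOR with the sign mask and then subtract it. The sketch below shows that idiom for 32-bit values as an illustration of the kind of sequence an ABS expansion can produce; the name is hypothetical, and the arithmetic is done on unsigned values to avoid signed overflow for the minimum value.

```cpp
#include <cassert>
#include <cstdint>

// Sign is 0 for non-negative X and 0xFFFFFFFF for negative X. The right shift
// of a negative value is arithmetic as of C++20 and implementation-defined
// (but arithmetic on common compilers) before that.
inline uint32_t absViaXor(int32_t X) {
  const uint32_t Sign = static_cast<uint32_t>(X >> 31);
  return (static_cast<uint32_t>(X) ^ Sign) - Sign;
}

inline void checkAbs() {
  assert(absViaXor(7) == 7u);
  assert(absViaXor(-5) == 5u);
  // The minimum value maps to itself as a bit pattern, as with ISD::ABS.
  assert(absViaXor(INT32_MIN) == 0x80000000u);
}
```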

| SDValue | scalarizeVectorLoad (LoadSDNode *LD, SelectionDAG &DAG) const |

| Turn load of vector type into a load of the individual elements. More... | |

| SDValue | scalarizeVectorStore (StoreSDNode *ST, SelectionDAG &DAG) const |

| std::pair< SDValue, SDValue > | expandUnalignedLoad (LoadSDNode *LD, SelectionDAG &DAG) const |

| Expands an unaligned load to 2 half-size loads for an integer, and possibly more for vectors. More... | |
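
The split into two half-size loads can be pictured with a byte buffer: read two 16-bit halves and merge them with a shift and an OR. This sketch assumes little-endian layout and reads each half byte by byte so that it works at any alignment on any host; the helper names are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

// Read a 16-bit little-endian value one byte at a time.
inline uint16_t loadHalfLE(const unsigned char *P) {
  return static_cast<uint16_t>(P[0] | (P[1] << 8));
}

// 32-bit load built from two half-size loads: the low half at P and the high
// half at P + 2, combined with a shift and an OR.
inline uint32_t loadWordViaHalves(const unsigned char *P) {
  const uint32_t Lo = loadHalfLE(P);
  const uint32_t Hi = loadHalfLE(P + 2);
  return Lo | (Hi << 16);
}

inline void checkUnalignedLoad() {
  // The word starts at offset 1, so it is misaligned relative to the buffer.
  const unsigned char Buf[] = {0x00, 0x78, 0x56, 0x34, 0x12};
  assert(loadWordViaHalves(Buf + 1) == 0x12345678u);
}
```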

| SDValue | expandUnalignedStore (StoreSDNode *ST, SelectionDAG &DAG) const |

| Expands an unaligned store to 2 half-size stores for integer values, and possibly more for vectors. More... | |

| SDValue | IncrementMemoryAddress (SDValue Addr, SDValue Mask, const SDLoc &DL, EVT DataVT, SelectionDAG &DAG, bool IsCompressedMemory) const |

| Increments memory address Addr according to the type of the value DataVT that should be stored. More... | |

| SDValue | getVectorElementPointer (SelectionDAG &DAG, SDValue VecPtr, EVT VecVT, SDValue Index) const |

| Get a pointer to vector element Index located in memory for a vector of type VecVT starting at a base address of VecPtr. More... | |

| SDValue | expandAddSubSat (SDNode *Node, SelectionDAG &DAG) const |

| Method for building the DAG expansion of ISD::[US][ADD|SUB]SAT. More... | |
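
Saturating arithmetic clamps the result at the type's bounds instead of wrapping. The sketch below covers only the unsigned add and subtract cases for 32-bit values, using overflow detection by comparison; the signed variants are omitted, and the names are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

// Unsigned saturating add: a wrapped sum is smaller than either operand.
constexpr uint32_t uaddSat(uint32_t A, uint32_t B) {
  return (A + B) < A ? UINT32_MAX : A + B;
}

// Unsigned saturating subtract: clamp at zero instead of wrapping.
constexpr uint32_t usubSat(uint32_t A, uint32_t B) {
  return A > B ? A - B : 0u;
}

inline void checkSaturating() {
  assert(uaddSat(0xFFFFFFF0u, 0x20u) == UINT32_MAX);
  assert(uaddSat(1u, 2u) == 3u);
  assert(usubSat(5u, 7u) == 0u);
  assert(usubSat(7u, 5u) == 2u);
}
```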

| SDValue | getExpandedFixedPointMultiplication (SDNode *Node, SelectionDAG &DAG) const |

| Method for building the DAG expansion of ISD::SMULFIX. More... | |

| virtual void | AdjustInstrPostInstrSelection (MachineInstr &MI, SDNode *Node) const |

| This method should be implemented by targets that mark instructions with the 'hasPostISelHook' flag. More... | |

| virtual SDValue | emitStackGuardXorFP (SelectionDAG &DAG, SDValue Val, const SDLoc &DL) const |

| virtual SDValue | LowerToTLSEmulatedModel (const GlobalAddressSDNode *GA, SelectionDAG &DAG) const |

| Lower TLS global address SDNode for target independent emulated TLS model. More... | |

| virtual SDValue | expandIndirectJTBranch (const SDLoc &dl, SDValue Value, SDValue Addr, SelectionDAG &DAG) const |

| Expands target specific indirect branch for the case of JumpTable expansion. More... | |

| SDValue | lowerCmpEqZeroToCtlzSrl (SDValue Op, SelectionDAG &DAG) const |

Public Member Functions inherited from llvm::TargetLoweringBase | |

| virtual void | markLibCallAttributes (MachineFunction *MF, unsigned CC, ArgListTy &Args) const |

| TargetLoweringBase (const TargetMachine &TM) | |

| NOTE: The TargetMachine owns TLOF. More... | |

| TargetLoweringBase (const TargetLoweringBase &)=delete | |

| TargetLoweringBase & | operator= (const TargetLoweringBase &)=delete |

| virtual | ~TargetLoweringBase ()=default |

| const TargetMachine & | getTargetMachine () const |

| virtual bool | useSoftFloat () const |

| MVT | getPointerTy (const DataLayout &DL, uint32_t AS=0) const |

| Return the pointer type for the given address space, defaults to the pointer type from the data layout. More... | |

| MVT | getFrameIndexTy (const DataLayout &DL) const |

| Return the type for frame index, which is determined by the alloca address space specified through the data layout. More... | |

| virtual MVT | getFenceOperandTy (const DataLayout &DL) const |

| Return the type for operands of fence. More... | |

| EVT | getShiftAmountTy (EVT LHSTy, const DataLayout &DL, bool LegalTypes=true) const |

| virtual MVT | getVectorIdxTy (const DataLayout &DL) const |

| Returns the type to be used for the index operand of: ISD::INSERT_VECTOR_ELT, ISD::EXTRACT_VECTOR_ELT, ISD::INSERT_SUBVECTOR, and ISD::EXTRACT_SUBVECTOR. More... | |

| virtual bool | isSelectSupported (SelectSupportKind) const |

| virtual bool | reduceSelectOfFPConstantLoads (bool IsFPSetCC) const |

| Return true if it is profitable to convert a select of FP constants into a constant pool load whose address depends on the select condition. More... | |

| bool | hasMultipleConditionRegisters () const |

| Return true if multiple condition registers are available. More... | |

| bool | hasExtractBitsInsn () const |

| Return true if the target has BitExtract instructions. More... | |

| virtual bool | shouldExpandBuildVectorWithShuffles (EVT, unsigned DefinedValues) const |

| virtual bool | hasStandaloneRem (EVT VT) const |

| Return true if the target can handle a standalone remainder operation. More... | |

| virtual bool | isFsqrtCheap (SDValue X, SelectionDAG &DAG) const |

| Return true if SQRT(X) shouldn't be replaced with X*RSQRT(X). More... | |

| int | getRecipEstimateSqrtEnabled (EVT VT, MachineFunction &MF) const |

| Return a ReciprocalEstimate enum value for a square root of the given type based on the function's attributes. More... | |

| int | getRecipEstimateDivEnabled (EVT VT, MachineFunction &MF) const |

| Return a ReciprocalEstimate enum value for a division of the given type based on the function's attributes. More... | |

| int | getSqrtRefinementSteps (EVT VT, MachineFunction &MF) const |

| Return the refinement step count for a square root of the given type based on the function's attributes. More... | |

| int | getDivRefinementSteps (EVT VT, MachineFunction &MF) const |

| Return the refinement step count for a division of the given type based on the function's attributes. More... | |

| bool | isSlowDivBypassed () const |

| Returns true if target has indicated at least one type should be bypassed. More... | |

| const DenseMap< unsigned int, unsigned int > & | getBypassSlowDivWidths () const |

| Returns map of slow types for division or remainder with corresponding fast types. More... | |

| bool | isJumpExpensive () const |

| Return true if Flow Control is an expensive operation that should be avoided. More... | |

| bool | isPredictableSelectExpensive () const |

| Return true if selects are only cheaper than branches if the branch is unlikely to be predicted right. More... | |

| virtual BranchProbability | getPredictableBranchThreshold () const |

| If a branch or a select condition is skewed in one direction by more than this factor, it is very likely to be predicted correctly. More... | |

| virtual bool | isLoadBitCastBeneficial (EVT LoadVT, EVT BitcastVT) const |

| Return true if the following transform is beneficial: fold (conv (load x)) -> (load (conv*)x). On architectures that don't natively support some vector loads efficiently, casting the load to a smaller vector of larger types and loading is more efficient; however, this can be undone by optimizations in the DAG combiner. More... | |

| virtual bool | isStoreBitCastBeneficial (EVT StoreVT, EVT BitcastVT) const |

| Return true if the following transform is beneficial: (store (y (conv x)), y*)) -> (store x, (x*)) More... | |

| virtual bool | storeOfVectorConstantIsCheap (EVT MemVT, unsigned NumElem, unsigned AddrSpace) const |

| Return true if it is expected to be cheaper to do a store of a non-zero vector constant with the given size and type for the address space than to store the individual scalar element constants. More... | |

| virtual bool | mergeStoresAfterLegalization () const |

| Allow store merging after legalization in addition to before legalization. More... | |

| virtual bool | isCtlzFast () const |

| Return true if ctlz instruction is fast. More... | |

| virtual bool | isMultiStoresCheaperThanBitsMerge (EVT LTy, EVT HTy) const |

| Return true if it is cheaper to split the store of a merged int val from a pair of smaller values into multiple stores. More... | |

| virtual bool | convertSetCCLogicToBitwiseLogic (EVT VT) const |

| Use bitwise logic to make pairs of compares more efficient. More... | |

| virtual MVT | hasFastEqualityCompare (unsigned NumBits) const |

| Return the preferred operand type if the target has a quick way to compare integer values of the given size. More... | |

| virtual bool | preferShiftsToClearExtremeBits (SDValue X) const |

| There are two ways to clear extreme bits (either low or high). Mask: x & (-1 << y) (the instcombine canonical form). Shifts: x >> y << y. Return true if the variant with 2 shifts is preferred. More... | |
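The two forms named above compute the same value when clearing low bits. A minimal sketch on 32-bit unsigned integers follows; the function names are hypothetical and the shift amount is assumed to be smaller than the bit width.

```cpp
#include <cassert>
#include <cstdint>

// Mask form: x & (-1 << y). Requires y < 32.
uint32_t clearLowBitsMask(uint32_t x, unsigned y) {
  assert(y < 32);
  return x & (~uint32_t{0} << y);
}

// Shift form: x >> y << y. Requires y < 32.
uint32_t clearLowBitsShifts(uint32_t x, unsigned y) {
  assert(y < 32);
  return (x >> y) << y;
}
```

Which form is cheaper depends on the target, which is the decision this hook exposes.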

| bool | enableExtLdPromotion () const |

| Return true if the target wants to use the optimization that turns ext(promotableInst1(...(promotableInstN(load)))) into promotedInst1(...(promotedInstN(ext(load)))). More... | |

| virtual bool | canCombineStoreAndExtract (Type *VectorTy, Value *Idx, unsigned &Cost) const |

| Return true if the target can combine store(extractelement VectorTy, Idx). More... | |

| virtual bool | shouldSplatInsEltVarIndex (EVT) const |

| Return true if inserting a scalar into a variable element of an undef vector is more efficiently handled by splatting the scalar instead. More... | |

| bool | hasFloatingPointExceptions () const |

| Return true if target supports floating point exceptions. More... | |

| virtual MVT::SimpleValueType | getCmpLibcallReturnType () const |

| Return the ValueType for comparison libcalls. More... | |

| BooleanContent | getBooleanContents (bool isVec, bool isFloat) const |

| For targets without i1 registers, this gives the nature of the high-bits of boolean values held in types wider than i1. More... | |
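To make the "nature of the high bits" concrete, the sketch below widens a boolean to 32 bits under each BooleanContent value. The enumerator names mirror the BooleanContent enum listed on this page; `widenBoolean` is a hypothetical helper, and choosing zero high bits for the undefined case is an arbitrary assumption of this model.

```cpp
#include <cassert>
#include <cstdint>

enum BooleanContent {
  UndefinedBooleanContent,
  ZeroOrOneBooleanContent,
  ZeroOrNegativeOneBooleanContent
};

// Hypothetical helper: widen a boolean to 32 bits according to the content kind.
int32_t widenBoolean(bool b, BooleanContent content) {
  switch (content) {
  case ZeroOrOneBooleanContent:
    return b ? 1 : 0;  // High bits are zero.
  case ZeroOrNegativeOneBooleanContent:
    return b ? -1 : 0; // High bits replicate the low bit.
  case UndefinedBooleanContent:
    return b ? 1 : 0;  // Only bit 0 is meaningful; this model picks zero above it.
  }
  assert(false && "unknown BooleanContent");
  return 0;
}
```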

| BooleanContent | getBooleanContents (EVT Type) const |

| Sched::Preference | getSchedulingPreference () const |

| Return target scheduling preference. More... | |

| virtual Sched::Preference | getSchedulingPreference (SDNode *) const |

| Some scheduler, e.g. More... | |

| virtual const TargetRegisterClass * | getRegClassFor (MVT VT) const |

| Return the register class that should be used for the specified value type. More... | |

| virtual const TargetRegisterClass * | getRepRegClassFor (MVT VT) const |

| Return the 'representative' register class for the specified value type. More... | |

| virtual uint8_t | getRepRegClassCostFor (MVT VT) const |

| Return the cost of the 'representative' register class for the specified value type. More... | |

| bool | isTypeLegal (EVT VT) const |

| Return true if the target has native support for the specified value type. More... | |

| const ValueTypeActionImpl & | getValueTypeActions () const |

| LegalizeTypeAction | getTypeAction (LLVMContext &Context, EVT VT) const |

| Return how we should legalize values of this type, either it is already legal (return 'Legal') or we need to promote it to a larger type (return 'Promote'), or we need to expand it into multiple registers of smaller integer type (return 'Expand'). More... | |

| LegalizeTypeAction | getTypeAction (MVT VT) const |

| EVT | getTypeToTransformTo (LLVMContext &Context, EVT VT) const |

| For types supported by the target, this is an identity function. More... | |

| EVT | getTypeToExpandTo (LLVMContext &Context, EVT VT) const |

| For types supported by the target, this is an identity function. More... | |

| unsigned | getVectorTypeBreakdown (LLVMContext &Context, EVT VT, EVT &IntermediateVT, unsigned &NumIntermediates, MVT &RegisterVT) const |

| Vector types are broken down into some number of legal first class types. More... | |

| virtual unsigned | getVectorTypeBreakdownForCallingConv (LLVMContext &Context, CallingConv::ID CC, EVT VT, EVT &IntermediateVT, unsigned &NumIntermediates, MVT &RegisterVT) const |

| Certain targets such as MIPS require that some types such as vectors are always broken down into scalars in some contexts. More... | |

| virtual bool | canOpTrap (unsigned Op, EVT VT) const |

| Returns true if the operation can trap for the value type. More... | |

| virtual bool | isVectorClearMaskLegal (ArrayRef< int >, EVT) const |

| Similar to isShuffleMaskLegal. More... | |

| LegalizeAction | getOperationAction (unsigned Op, EVT VT) const |

| Return how this operation should be treated: either it is legal, needs to be promoted to a larger size, needs to be expanded to some other code sequence, or the target has a custom expander for it. More... | |

| virtual bool | isSupportedFixedPointOperation (unsigned Op, EVT VT, unsigned Scale) const |

| Custom method defined by each target to indicate if an operation which may require a scale is supported natively by the target. More... | |

| LegalizeAction | getFixedPointOperationAction (unsigned Op, EVT VT, unsigned Scale) const |

| Some fixed point operations may be natively supported by the target but only for specific scales. More... | |

| LegalizeAction | getStrictFPOperationAction (unsigned Op, EVT VT) const |

| bool | isOperationLegalOrCustom (unsigned Op, EVT VT) const |

| Return true if the specified operation is legal on this target or can be made legal with custom lowering. More... | |

| bool | isOperationLegalOrPromote (unsigned Op, EVT VT) const |

| Return true if the specified operation is legal on this target or can be made legal using promotion. More... | |

| bool | isOperationLegalOrCustomOrPromote (unsigned Op, EVT VT) const |

| Return true if the specified operation is legal on this target or can be made legal with custom lowering or using promotion. More... | |

| bool | isOperationCustom (unsigned Op, EVT VT) const |

| Return true if the operation uses custom lowering, regardless of whether the type is legal or not. More... | |
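The operation-action queries above can be read as lookups into a table keyed by opcode and value type. The sketch below is a simplified stand-in, not the LLVM data structure: `ActionTable` is a hypothetical class, opcodes and types are plain integers, unset entries are assumed to default to Legal, and the type-legality checks that the real queries may also perform are omitted.

```cpp
#include <cassert>
#include <cstdint>
#include <map>
#include <utility>

enum LegalizeAction : uint8_t { Legal, Promote, Expand, LibCall, Custom };

// Hypothetical stand-in for a per-(opcode, type) action table.
class ActionTable {
  std::map<std::pair<unsigned, unsigned>, LegalizeAction> Actions;

public:
  void setOperationAction(unsigned Op, unsigned VT, LegalizeAction A) {
    Actions[std::make_pair(Op, VT)] = A;
  }
  // Assumption of this model: entries that were never set are Legal.
  LegalizeAction getOperationAction(unsigned Op, unsigned VT) const {
    auto It = Actions.find(std::make_pair(Op, VT));
    return It == Actions.end() ? Legal : It->second;
  }
  bool isOperationLegalOrCustom(unsigned Op, unsigned VT) const {
    LegalizeAction A = getOperationAction(Op, VT);
    return A == Legal || A == Custom;
  }
  bool isOperationLegalOrPromote(unsigned Op, unsigned VT) const {
    LegalizeAction A = getOperationAction(Op, VT);
    return A == Legal || A == Promote;
  }
  bool isOperationCustom(unsigned Op, unsigned VT) const {
    return getOperationAction(Op, VT) == Custom;
  }
};
```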

| virtual bool | areJTsAllowed (const Function *Fn) const |

| Return true if lowering to a jump table is allowed. More... | |

| bool | rangeFitsInWord (const APInt &Low, const APInt &High, const DataLayout &DL) const |

| Check whether the range [Low,High] fits in a machine word. More... | |
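A rough way to picture this check: the inclusive range [Low, High] contains High - Low + 1 values, and it fits when that count does not exceed the word width in bits. The sketch below uses plain 64-bit integers instead of APInt and takes the word width as a parameter; both the name `rangeFitsInWordSketch` and that signature are assumptions made for illustration.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical sketch: does the inclusive range [Low, High] contain at most
// WordBits distinct values? Written as (High - Low) < WordBits to avoid
// overflow when computing High - Low + 1.
bool rangeFitsInWordSketch(uint64_t Low, uint64_t High, unsigned WordBits) {
  assert(Low <= High);
  return (High - Low) < WordBits;
}
```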

| virtual bool | isSuitableForJumpTable (const SwitchInst *SI, uint64_t NumCases, uint64_t Range) const |

| Return true if lowering to a jump table is suitable for a set of case clusters that may contain NumCases cases spanning a range of Range values. More... | |

| bool | isSuitableForBitTests (unsigned NumDests, unsigned NumCmps, const APInt &Low, const APInt &High, const DataLayout &DL) const |

| Return true if lowering to a bit test is suitable for a set of case clusters which contains NumDests unique destinations, Low and High as its lowest and highest case values, and expects NumCmps case value comparisons. More... | |

| bool | isOperationExpand (unsigned Op, EVT VT) const |

| Return true if the specified operation is illegal on this target or unlikely to be made legal with custom lowering. More... | |

| bool | isOperationLegal (unsigned Op, EVT VT) const |

| Return true if the specified operation is legal on this target. More... | |

| LegalizeAction | getLoadExtAction (unsigned ExtType, EVT ValVT, EVT MemVT) const |

| Return how this load with extension should be treated: either it is legal, needs to be promoted to a larger size, needs to be expanded to some other code sequence, or the target has a custom expander for it. More... | |

| bool | isLoadExtLegal (unsigned ExtType, EVT ValVT, EVT MemVT) const |

| Return true if the specified load with extension is legal on this target. More... | |

| bool | isLoadExtLegalOrCustom (unsigned ExtType, EVT ValVT, EVT MemVT) const |

| Return true if the specified load with extension is legal or custom on this target. More... | |

| LegalizeAction | getTruncStoreAction (EVT ValVT, EVT MemVT) const |

| Return how this store with truncation should be treated: either it is legal, needs to be promoted to a larger size, needs to be expanded to some other code sequence, or the target has a custom expander for it. More... | |

| bool | isTruncStoreLegal (EVT ValVT, EVT MemVT) const |

| Return true if the specified store with truncation is legal on this target. More... | |

| bool | isTruncStoreLegalOrCustom (EVT ValVT, EVT MemVT) const |

| Return true if the specified store with truncation is legal or custom on this target. More... | |

| LegalizeAction | getIndexedLoadAction (unsigned IdxMode, MVT VT) const |

| Return how the indexed load should be treated: either it is legal, needs to be promoted to a larger size, needs to be expanded to some other code sequence, or the target has a custom expander for it. More... | |

| bool | isIndexedLoadLegal (unsigned IdxMode, EVT VT) const |

| Return true if the specified indexed load is legal on this target. More... | |

| LegalizeAction | getIndexedStoreAction (unsigned IdxMode, MVT VT) const |

| Return how the indexed store should be treated: either it is legal, needs to be promoted to a larger size, needs to be expanded to some other code sequence, or the target has a custom expander for it. More... | |

| bool | isIndexedStoreLegal (unsigned IdxMode, EVT VT) const |

| Return true if the specified indexed store is legal on this target. More... | |

| LegalizeAction | getCondCodeAction (ISD::CondCode CC, MVT VT) const |

| Return how the condition code should be treated: either it is legal, needs to be expanded to some other code sequence, or the target has a custom expander for it. More... | |

| bool | isCondCodeLegal (ISD::CondCode CC, MVT VT) const |

| Return true if the specified condition code is legal on this target. More... | |

| bool | isCondCodeLegalOrCustom (ISD::CondCode CC, MVT VT) const |

| Return true if the specified condition code is legal or custom on this target. More... | |

| MVT | getTypeToPromoteTo (unsigned Op, MVT VT) const |

| If the action for this operation is to promote, this method returns the ValueType to promote to. More... | |

| EVT | getValueType (const DataLayout &DL, Type *Ty, bool AllowUnknown=false) const |

| Return the EVT corresponding to this LLVM type. More... | |

| MVT | getSimpleValueType (const DataLayout &DL, Type *Ty, bool AllowUnknown=false) const |

| Return the MVT corresponding to this LLVM type. See getValueType. More... | |

| virtual unsigned | getByValTypeAlignment (Type *Ty, const DataLayout &DL) const |

| Return the desired alignment for ByVal or InAlloca aggregate function arguments in the caller parameter area. More... | |

| MVT | getRegisterType (MVT VT) const |

| Return the type of registers that this ValueType will eventually require. More... | |

| MVT | getRegisterType (LLVMContext &Context, EVT VT) const |

| Return the type of registers that this ValueType will eventually require. More... | |

| unsigned | getNumRegisters (LLVMContext &Context, EVT VT) const |

| Return the number of registers that this ValueType will eventually require. More... | |

| virtual MVT | getRegisterTypeForCallingConv (LLVMContext &Context, CallingConv::ID CC, EVT VT) const |

| Certain combinations of ABIs, Targets and features require that types are legal for some operations and not for other operations. More... | |

| virtual unsigned | getNumRegistersForCallingConv (LLVMContext &Context, CallingConv::ID CC, EVT VT) const |

| Certain targets require unusual breakdowns of certain types. More... | |

| virtual unsigned | getABIAlignmentForCallingConv (Type *ArgTy, DataLayout DL) const |

| Certain targets have context-sensitive alignment requirements, where one type has the alignment requirement of another type. More... | |

| virtual bool | ShouldShrinkFPConstant (EVT) const |

| If true, then instruction selection should seek to shrink the FP constant of the specified type to a smaller type in order to save space and / or reduce runtime. More... | |

| bool | hasBigEndianPartOrdering (EVT VT, const DataLayout &DL) const |

| When splitting a value of the specified type into parts, does the Lo or Hi part come first? This usually follows the endianness, except for ppcf128, where the Hi part always comes first. More... | |

| bool | hasTargetDAGCombine (ISD::NodeType NT) const |

| If true, the target has custom DAG combine transformations that it can perform for the specified node. More... | |

| unsigned | getGatherAllAliasesMaxDepth () const |

| unsigned | getMaxStoresPerMemset (bool OptSize) const |

| Get maximum # of store operations permitted for llvm.memset. More... | |

| unsigned | getMaxStoresPerMemcpy (bool OptSize) const |

| Get maximum # of store operations permitted for llvm.memcpy. More... | |

| virtual unsigned | getMaxGluedStoresPerMemcpy () const |

| Get maximum # of store operations to be glued together. More... | |

| unsigned | getMaxExpandSizeMemcmp (bool OptSize) const |

| Get maximum # of load operations permitted for memcmp. More... | |

| virtual unsigned | getMemcmpEqZeroLoadsPerBlock () const |

| For memcmp expansion when the memcmp result is only compared equal or not-equal to 0, allow up to this number of load pairs per block. More... | |

| unsigned | getMaxStoresPerMemmove (bool OptSize) const |

| Get maximum # of store operations permitted for llvm.memmove. More... | |

| bool | allowsMemoryAccess (LLVMContext &Context, const DataLayout &DL, EVT VT, unsigned AddrSpace=0, unsigned Alignment=1, bool *Fast=nullptr) const |

| Return true if the target supports a memory access of this type for the given address space and alignment. More... | |

| virtual bool | isSafeMemOpType (MVT) const |

| Returns true if it's safe to use load / store of the specified type to expand memcpy / memset inline. More... | |

| bool | usesUnderscoreSetJmp () const |

| Determine if we should use _setjmp or setjmp to implement llvm.setjmp. More... | |

| bool | usesUnderscoreLongJmp () const |

| Determine if we should use _longjmp or longjmp to implement llvm.longjmp. More... | |

| virtual unsigned | getMinimumJumpTableEntries () const |

| Return lower limit for number of blocks in a jump table. More... | |

| unsigned | getMinimumJumpTableDensity (bool OptForSize) const |

| Return lower limit of the density in a jump table. More... | |

| unsigned | getMaximumJumpTableSize () const |

| Return upper limit for number of entries in a jump table. More... | |
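The three jump table limits above (minimum entries, minimum density, maximum size) combine naturally into one suitability test. The sketch below is a simplified model under stated assumptions, not the LLVM implementation: `JumpTableLimits` and `looksSuitableForJumpTable` are hypothetical names, density is treated as a percentage of the covered range, and the minimum is applied to the case count for simplicity.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical bundle of the tunables described above.
struct JumpTableLimits {
  unsigned MinEntries; // Lower limit on the number of cases.
  unsigned MinDensity; // Lower limit on density, in percent.
  unsigned MaxSize;    // Upper limit on the number of table entries.
};

// Hypothetical check: NumCases cases spread over Range consecutive values.
bool looksSuitableForJumpTable(uint64_t NumCases, uint64_t Range,
                               const JumpTableLimits &L) {
  assert(NumCases > 0 && Range >= NumCases);
  if (NumCases < L.MinEntries)
    return false; // Too few cases to be worth a table.
  if (Range > L.MaxSize)
    return false; // Table would be too large.
  return NumCases * 100 >= Range * L.MinDensity; // Dense enough?
}
```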

| virtual bool | isJumpTableRelative () const |

| unsigned | getStackPointerRegisterToSaveRestore () const |

| If a physical register, this specifies the register that llvm.savestack/llvm.restorestack should save and restore. More... | |

| unsigned | getJumpBufSize () const |

| Returns the target's jmp_buf size in bytes (if never set, the default is 200) More... | |

| unsigned | getJumpBufAlignment () const |

| Returns the target's jmp_buf alignment in bytes (if never set, the default is 0) More... | |

| unsigned | getMinStackArgumentAlignment () const |

| Return the minimum stack alignment of an argument. More... | |

| unsigned | getMinFunctionAlignment () const |

| Return the minimum function alignment. More... | |

| unsigned | getPrefFunctionAlignment () const |

| Return the preferred function alignment. More... | |

| virtual unsigned | getPrefLoopAlignment (MachineLoop *ML=nullptr) const |

| Return the preferred loop alignment. More... | |

| virtual bool | alignLoopsWithOptSize () const |

| Should loops be aligned even when the function is marked OptSize (but not MinSize). More... | |

| virtual bool | useStackGuardXorFP () const |

| If this function returns true, stack protection checks should XOR the frame pointer (or whichever pointer is used to address locals) into the stack guard value before checking it. More... | |

| virtual StringRef | getStackProbeSymbolName (MachineFunction &MF) const |

| Returns the name of the symbol used to emit stack probes or the empty string if not applicable. More... | |

| virtual bool | isCheapAddrSpaceCast (unsigned SrcAS, unsigned DestAS) const |

| Returns true if a cast from SrcAS to DestAS is "cheap", such that e.g. More... | |

| virtual bool | shouldAlignPointerArgs (CallInst *, unsigned &, unsigned &) const |

| Return true if the pointer arguments to CI should be aligned by aligning the object whose address is being passed. More... | |

| virtual bool | shouldSignExtendTypeInLibCall (EVT Type, bool IsSigned) const |

| Returns true if arguments should be sign-extended in lib calls. More... | |

| virtual LoadInst * | lowerIdempotentRMWIntoFencedLoad (AtomicRMWInst *RMWI) const |

| On some platforms, an AtomicRMW that never actually modifies the value (such as fetch_add of 0) can be turned into a fence followed by an atomic load. More... | |

| virtual ISD::NodeType | getExtendForAtomicOps () const |

| Returns how the platform's atomic operations are extended (ZERO_EXTEND, SIGN_EXTEND, or ANY_EXTEND). More... | |

| virtual bool | convertSelectOfConstantsToMath (EVT VT) const |

| Return true if a select of constants (select Cond, C1, C2) should be transformed into simple math ops with the condition value. More... | |

| virtual bool | decomposeMulByConstant (EVT VT, SDValue C) const |

| Return true if it is profitable to transform an integer multiplication-by-constant into simpler operations like shifts and adds. More... | |

| virtual bool | shouldUseStrictFP_TO_INT (EVT FpVT, EVT IntVT, bool IsSigned) const |

| Return true if it is more correct/profitable to use strict FP_TO_INT conversion operations - canonicalizing the FP source value instead of converting all cases and then selecting based on value. More... | |

| virtual bool | getAddrModeArguments (IntrinsicInst *, SmallVectorImpl< Value *> &, Type *&) const |

| CodeGenPrepare sinks address calculations into the same BB as Load/Store instructions reading the address. More... | |

| virtual bool | isLegalStoreImmediate (int64_t Value) const |

| Return true if the specified immediate is legal for the value input of a store instruction. More... | |

| virtual bool | isVectorShiftByScalarCheap (Type *Ty) const |

| Return true if it's significantly cheaper to shift a vector by a uniform scalar than by an amount which will vary across each lane. More... | |

| virtual bool | isCommutativeBinOp (unsigned Opcode) const |

| Returns true if the opcode is a commutative binary operation. More... | |

| virtual bool | allowTruncateForTailCall (Type *FromTy, Type *ToTy) const |

| Return true if a truncation from FromTy to ToTy is permitted when deciding whether a call is in tail position. More... | |

| bool | isExtFree (const Instruction *I) const |

| Return true if the extension represented by I is free. More... | |

| bool | isExtLoad (const LoadInst *Load, const Instruction *Ext, const DataLayout &DL) const |

| Return true if Load and Ext can form an ExtLoad. More... | |

| virtual bool | isSExtCheaperThanZExt (EVT FromTy, EVT ToTy) const |

| Return true if sign-extension from FromTy to ToTy is cheaper than zero-extension. More... | |

| virtual bool | hasVectorBlend () const |

| Return true if the target has a vector blend instruction. More... | |

| virtual bool | isFPExtFree (EVT DestVT, EVT SrcVT) const |

| Return true if an fpext operation is free (for instance, because single-precision floating-point numbers are implicitly extended to double-precision). More... | |

| virtual bool | isFPExtFoldable (unsigned Opcode, EVT DestVT, EVT SrcVT) const |

| Return true if an fpext operation input to an Opcode operation is free (for instance, because half-precision floating-point numbers are implicitly extended to float-precision) for an FMA instruction. More... | |

| virtual bool | isVectorLoadExtDesirable (SDValue ExtVal) const |

| Return true if folding a vector load into ExtVal (a sign, zero, or any extend node) is profitable. More... | |

| virtual bool | isFNegFree (EVT VT) const |

| Return true if an fneg operation is free to the point where it is never worthwhile to replace it with a bitwise operation. More... | |

| virtual bool | isFAbsFree (EVT VT) const |

| Return true if an fabs operation is free to the point where it is never worthwhile to replace it with a bitwise operation. More... | |

| virtual bool | isNarrowingProfitable (EVT, EVT) const |

| Return true if it's profitable to narrow operations of type VT1 to VT2. More... | |

| virtual bool | shouldScalarizeBinop (SDValue VecOp) const |

| Try to convert an extract element of a vector binary operation into an extract element followed by a scalar operation. More... | |

| virtual bool | aggressivelyPreferBuildVectorSources (EVT VecVT) const |

| void | setLibcallName (RTLIB::Libcall Call, const char *Name) |

| Rename the default libcall routine name for the specified libcall. More... | |

| const char * | getLibcallName (RTLIB::Libcall Call) const |

| Get the libcall routine name for the specified libcall. More... | |

| void | setCmpLibcallCC (RTLIB::Libcall Call, ISD::CondCode CC) |

| Override the default CondCode to be used to test the result of the comparison libcall against zero. More... | |

| ISD::CondCode | getCmpLibcallCC (RTLIB::Libcall Call) const |

| Get the CondCode that's to be used to test the result of the comparison libcall against zero. More... | |

| void | setLibcallCallingConv (RTLIB::Libcall Call, CallingConv::ID CC) |

| Set the CallingConv that should be used for the specified libcall. More... | |

| CallingConv::ID | getLibcallCallingConv (RTLIB::Libcall Call) const |

| Get the CallingConv that should be used for the specified libcall. More... | |

| int | InstructionOpcodeToISD (unsigned Opcode) const |

| Get the ISD node that corresponds to the Instruction class opcode. More... | |

| std::pair< int, MVT > | getTypeLegalizationCost (const DataLayout &DL, Type *Ty) const |

| Estimate the cost of type-legalization and the legalized type. More... | |

| unsigned | getMaxAtomicSizeInBitsSupported () const |

| Returns the maximum atomic operation size (in bits) supported by the backend. More... | |

| unsigned | getMinCmpXchgSizeInBits () const |

| Returns the size of the smallest cmpxchg or ll/sc instruction the backend supports. More... | |

| bool | supportsUnalignedAtomics () const |

| Whether the target supports unaligned atomic operations. More... | |

| virtual bool | shouldInsertFencesForAtomic (const Instruction *I) const |

| Whether AtomicExpandPass should automatically insert fences and reduce ordering for this atomic. More... | |

| virtual Value * | emitMaskedAtomicRMWIntrinsic (IRBuilder<> &Builder, AtomicRMWInst *AI, Value *AlignedAddr, Value *Incr, Value *Mask, Value *ShiftAmt, AtomicOrdering Ord) const |

| Perform a masked atomicrmw using a target-specific intrinsic. More... | |

| virtual Value * | emitMaskedAtomicCmpXchgIntrinsic (IRBuilder<> &Builder, AtomicCmpXchgInst *CI, Value *AlignedAddr, Value *CmpVal, Value *NewVal, Value *Mask, AtomicOrdering Ord) const |

| Perform a masked cmpxchg using a target-specific intrinsic. More... | |

| virtual Instruction * | emitLeadingFence (IRBuilder<> &Builder, Instruction *Inst, AtomicOrdering Ord) const |

| Inserts in the IR a target-specific intrinsic specifying a fence. More... | |

| virtual Instruction * | emitTrailingFence (IRBuilder<> &Builder, Instruction *Inst, AtomicOrdering Ord) const |

Additional Inherited Members | |

Public Types inherited from llvm::TargetLowering | |

| enum | ConstraintType { C_Register, C_RegisterClass, C_Memory, C_Other, C_Unknown } |

| enum | ConstraintWeight { CW_Invalid = -1, CW_Okay = 0, CW_Good = 1, CW_Better = 2, CW_Best = 3, CW_SpecificReg = CW_Okay, CW_Register = CW_Good, CW_Memory = CW_Better, CW_Constant = CW_Best, CW_Default = CW_Okay } |

| using | AsmOperandInfoVector = std::vector< AsmOperandInfo > |

Public Types inherited from llvm::TargetLoweringBase | |

| enum | LegalizeAction : uint8_t { Legal, Promote, Expand, LibCall, Custom } |

| This enum indicates whether operations are valid for a target, and if not, what action should be used to make them valid. More... | |

| enum | LegalizeTypeAction : uint8_t { TypeLegal, TypePromoteInteger, TypeExpandInteger, TypeSoftenFloat, TypeExpandFloat, TypeScalarizeVector, TypeSplitVector, TypeWidenVector, TypePromoteFloat } |

| This enum indicates whether types are legal for a target, and if not, what action should be used to make them valid. More... | |

| enum | BooleanContent { UndefinedBooleanContent, ZeroOrOneBooleanContent, ZeroOrNegativeOneBooleanContent } |

| Enum that describes how the target represents true/false values. More... | |

| enum | SelectSupportKind { ScalarValSelect, ScalarCondVectorVal, VectorMaskSelect } |

| Enum that describes what type of support for selects the target has. More... | |

| enum | AtomicExpansionKind { AtomicExpansionKind::None, AtomicExpansionKind::LLSC, AtomicExpansionKind::LLOnly, AtomicExpansionKind::CmpXChg, AtomicExpansionKind::MaskedIntrinsic } |

| Enum that specifies what an atomic load/AtomicRMWInst is expanded to, if at all. More... | |

| enum | MulExpansionKind { MulExpansionKind::Always, MulExpansionKind::OnlyLegalOrCustom } |

| Enum that specifies when a multiplication should be expanded. More... | |

| enum | ReciprocalEstimate : int { Unspecified = -1, Disabled = 0, Enabled = 1 } |

| Reciprocal estimate status values used by the functions below. More... | |

| using | LegalizeKind = std::pair< LegalizeTypeAction, EVT > |

| LegalizeKind holds the legalization kind that needs to happen to EVT in order to type-legalize it. More... | |

| using | ArgListTy = std::vector< ArgListEntry > |

Static Public Member Functions inherited from llvm::TargetLoweringBase | |

| static ISD::NodeType | getExtendForContent (BooleanContent Content) |

Protected Member Functions inherited from llvm::TargetLoweringBase | |

| void | initActions () |

| Initialize all of the actions to default values. More... | |

| Value * | getDefaultSafeStackPointerLocation (IRBuilder<> &IRB, bool UseTLS) const |

| void | setBooleanContents (BooleanContent Ty) |

| Specify how the target extends the result of integer and floating point boolean values from i1 to a wider type. More... | |

| void | setBooleanContents (BooleanContent IntTy, BooleanContent FloatTy) |

| Specify how the target extends the result of integer and floating point boolean values from i1 to a wider type. More... | |

| void | setBooleanVectorContents (BooleanContent Ty) |

| Specify how the target extends the result of a vector boolean value from a vector of i1 to a wider type. More... | |

| void | setSchedulingPreference (Sched::Preference Pref) |

| Specify the target scheduling preference. More... | |

| void | setUseUnderscoreSetJmp (bool Val) |

| Indicate whether this target prefers to use _setjmp to implement llvm.setjmp or the version without _. More... | |

| void | setUseUnderscoreLongJmp (bool Val) |

| Indicate whether this target prefers to use _longjmp to implement llvm.longjmp or the version without _. More... | |

| void | setMinimumJumpTableEntries (unsigned Val) |

| Indicate the minimum number of blocks to generate jump tables. More... | |

| void | setMaximumJumpTableSize (unsigned) |

| Indicate the maximum number of entries in jump tables. More... | |

| void | setStackPointerRegisterToSaveRestore (unsigned R) |

| If set to a physical register, this specifies the register that llvm.savestack/llvm.restorestack should save and restore. More... | |

| void | setHasMultipleConditionRegisters (bool hasManyRegs=true) |

| Tells the code generator that the target has multiple (allocatable) condition registers that can be used to store the results of comparisons for use by selects and conditional branches. More... | |

| void | setHasExtractBitsInsn (bool hasExtractInsn=true) |

| Tells the code generator that the target has BitExtract instructions. More... | |

| void | setJumpIsExpensive (bool isExpensive=true) |

| Tells the code generator not to expand logic operations on comparison predicates into separate sequences that increase the amount of flow control. More... | |

| void | setHasFloatingPointExceptions (bool FPExceptions=true) |

| Tells the code generator that this target supports floating point exceptions and cares about preserving floating point exception behavior. More... | |

| void | addBypassSlowDiv (unsigned int SlowBitWidth, unsigned int FastBitWidth) |

| Tells the code generator which bitwidths to bypass. More... | |

| void | addRegisterClass (MVT VT, const TargetRegisterClass *RC) |

| Add the specified register class as an available regclass for the specified value type. More... | |

| virtual std::pair< const TargetRegisterClass *, uint8_t > | findRepresentativeClass (const TargetRegisterInfo *TRI, MVT VT) const |

| Return the largest legal super-reg register class of the register class for the specified type and its associated "cost". More... | |

| void | computeRegisterProperties (const TargetRegisterInfo *TRI) |

| Once all of the register classes are added, this allows us to compute derived properties we expose. More... | |

| void | setOperationAction (unsigned Op, MVT VT, LegalizeAction Action) |

| Indicate that the specified operation does not work with the specified type and indicate what to do about it. More... | |

| void | setLoadExtAction (unsigned ExtType, MVT ValVT, MVT MemVT, LegalizeAction Action) |

| Indicate that the specified load with extension does not work with the specified type and indicate what to do about it. More... | |

| void | setTruncStoreAction (MVT ValVT, MVT MemVT, LegalizeAction Action) |

| Indicate that the specified truncating store does not work with the specified type and indicate what to do about it. More... | |

| void | setIndexedLoadAction (unsigned IdxMode, MVT VT, LegalizeAction Action) |

| Indicate that the specified indexed load does or does not work with the specified type and indicate what to do about it. More... | |

| void | setIndexedStoreAction (unsigned IdxMode, MVT VT, LegalizeAction Action) |

| Indicate that the specified indexed store does or does not work with the specified type and indicate what to do about it. More... | |

| void | setCondCodeAction (ISD::CondCode CC, MVT VT, LegalizeAction Action) |

| Indicate that the specified condition code is or isn't supported on the target and indicate what to do about it. More... | |

| void | AddPromotedToType (unsigned Opc, MVT OrigVT, MVT DestVT) |

| If Opc/OrigVT is specified as being promoted, the promotion code defaults to trying a larger integer/fp until it can find one that works. More... | |

| void | setOperationPromotedToType (unsigned Opc, MVT OrigVT, MVT DestVT) |

| Convenience method to set an operation to Promote and specify the type in a single call. More... | |

| void | setTargetDAGCombine (ISD::NodeType NT) |

| Targets should invoke this method for each target independent node that they want to provide a custom DAG combiner for by implementing the PerformDAGCombine virtual method. More... | |

| void | setJumpBufSize (unsigned Size) |

| Set the target's required jmp_buf buffer size (in bytes); default is 200. More... | |

| void | setJumpBufAlignment (unsigned Align) |

| Set the target's required jmp_buf buffer alignment (in bytes); default is 0. More... | |

| void | setMinFunctionAlignment (unsigned Align) |

| Set the target's minimum function alignment (in log2(bytes)). More... | |

| void | setPrefFunctionAlignment (unsigned Align) |

| Set the target's preferred function alignment. More... | |

| void | setPrefLoopAlignment (unsigned Align) |

| Set the target's preferred loop alignment. More... | |

| void | setMinStackArgumentAlignment (unsigned Align) |

| Set the minimum stack alignment of an argument (in log2(bytes)). More... | |

| void | setMaxAtomicSizeInBitsSupported (unsigned SizeInBits) |

| Set the maximum atomic operation size supported by the backend. More... | |

| void | setMinCmpXchgSizeInBits (unsigned SizeInBits) |

| Sets the minimum cmpxchg or ll/sc size supported by the backend. More... | |

| void | setSupportsUnalignedAtomics (bool UnalignedSupported) |

| Sets whether unaligned atomic operations are supported. More... | |

| bool | isLegalRC (const TargetRegisterInfo &TRI, const TargetRegisterClass &RC) const |

| Return true if the value types that can be represented by the specified register class are all legal. More... | |

| MachineBasicBlock * | emitPatchPoint (MachineInstr &MI, MachineBasicBlock *MBB) const |

| Replace/modify any TargetFrameIndex operands with a target-dependent sequence of memory operands that is recognized by PrologEpilogInserter. More... | |

| MachineBasicBlock * | emitXRayCustomEvent (MachineInstr &MI, MachineBasicBlock *MBB) const |

| Replace/modify the XRay custom event operands with target-dependent details. More... | |

| MachineBasicBlock * | emitXRayTypedEvent (MachineInstr &MI, MachineBasicBlock *MBB) const |

| Replace/modify the XRay typed event operands with target-dependent details. More... | |

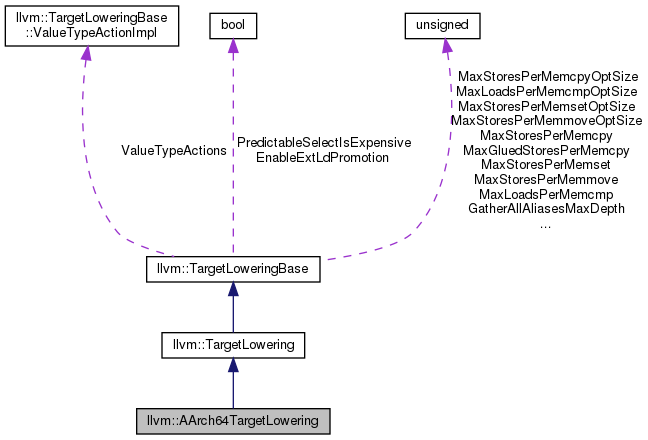

Protected Attributes inherited from llvm::TargetLoweringBase | |

| ValueTypeActionImpl | ValueTypeActions |

| unsigned | GatherAllAliasesMaxDepth |

| Depth that GatherAllAliases should continue looking for chain dependencies when trying to find a more preferable chain. More... | |

| unsigned | MaxStoresPerMemset |

| Specify maximum number of store instructions per memset call. More... | |

| unsigned | MaxStoresPerMemsetOptSize |

| Maximum number of store operations that may be substituted for the call to memset, used for functions with OptSize attribute. More... | |

| unsigned | MaxStoresPerMemcpy |

| Specify maximum number of store instructions per memcpy call. More... | |

| unsigned | MaxGluedStoresPerMemcpy = 0 |

| Specify max number of store instructions to glue in inlined memcpy. More... | |

| unsigned | MaxStoresPerMemcpyOptSize |

| Maximum number of store operations that may be substituted for a call to memcpy, used for functions with OptSize attribute. More... | |

| unsigned | MaxLoadsPerMemcmp |

| unsigned | MaxLoadsPerMemcmpOptSize |

| unsigned | MaxStoresPerMemmove |

| Specify maximum number of store instructions per memmove call. More... | |

| unsigned | MaxStoresPerMemmoveOptSize |

| Maximum number of store instructions that may be substituted for a call to memmove, used for functions with OptSize attribute. More... | |

| bool | PredictableSelectIsExpensive |

| Tells the code generator that select is more expensive than a branch if the branch is usually predicted right. More... | |

| bool | EnableExtLdPromotion |

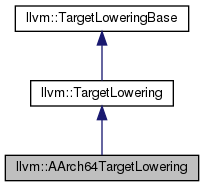

Detailed Description

Definition at line 241 of file AArch64ISelLowering.h.

Constructor & Destructor Documentation

◆ AArch64TargetLowering()

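| AArch64TargetLowering::AArch64TargetLowering (const TargetMachine &TM, const AArch64Subtarget &STI) | explicit |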

Definition at line 119 of file AArch64ISelLowering.cpp.

References llvm::ISD::ABS, llvm::ISD::ADD, llvm::ISD::ADDC, llvm::ISD::ADDE, llvm::TargetLoweringBase::AddPromotedToType(), llvm::TargetLoweringBase::addRegisterClass(), llvm::MVT::all_valuetypes(), llvm::ISD::AND, llvm::ISD::ANY_EXTEND, assert(), llvm::ISD::ATOMIC_CMP_SWAP, llvm::ISD::ATOMIC_LOAD_AND, llvm::ISD::ATOMIC_LOAD_SUB, llvm::ISD::BITCAST, llvm::ISD::BITREVERSE, llvm::ISD::BlockAddress, llvm::ISD::BR_CC, llvm::ISD::BR_JT, llvm::ISD::BRCOND, llvm::ISD::BSWAP, llvm::ISD::BUILD_PAIR, llvm::ISD::BUILD_VECTOR, llvm::EVT::changeVectorElementTypeToInteger(), llvm::TargetLoweringBase::computeRegisterProperties(), llvm::ISD::CONCAT_VECTORS, llvm::ISD::ConstantFP, llvm::ISD::ConstantPool, llvm::ISD::CTLZ, llvm::ISD::CTPOP, llvm::ISD::CTTZ, llvm::TargetLoweringBase::Custom, llvm::ISD::DYNAMIC_STACKALLOC, llvm::TargetLoweringBase::EnableExtLdPromotion, llvm::TargetLoweringBase::Expand, llvm::ISD::EXTLOAD, llvm::ISD::EXTRACT_SUBVECTOR, llvm::ISD::EXTRACT_VECTOR_ELT, llvm::MVT::f128, llvm::MVT::f16, llvm::MVT::f32, llvm::MVT::f64, llvm::MVT::f80, llvm::ISD::FABS, llvm::ISD::FADD, llvm::ISD::FCEIL, llvm::ISD::FCOPYSIGN, llvm::ISD::FCOS, llvm::ISD::FDIV, llvm::ISD::FEXP, llvm::ISD::FEXP2, llvm::ISD::FFLOOR, llvm::ISD::FLOG, llvm::ISD::FLOG10, llvm::ISD::FLOG2, llvm::ISD::FLT_ROUNDS_, llvm::ISD::FMA, llvm::ISD::FMAXIMUM, llvm::ISD::FMAXNUM, llvm::ISD::FMINIMUM, llvm::ISD::FMINNUM, llvm::ISD::FMUL, llvm::ISD::FNEARBYINT, llvm::ISD::FNEG, llvm::ISD::FP_EXTEND, llvm::ISD::FP_ROUND, llvm::ISD::FP_TO_SINT, llvm::ISD::FP_TO_UINT, llvm::MVT::fp_valuetypes(), llvm::ISD::FPOW, llvm::ISD::FPOWI, llvm::ISD::FREM, llvm::ISD::FRINT, llvm::ISD::FROUND, llvm::ISD::FSIN, llvm::ISD::FSINCOS, llvm::ISD::FSQRT, llvm::ISD::FSUB, llvm::ISD::FTRUNC, llvm::TargetMachine::getCodeModel(), llvm::TargetLoweringBase::getLibcallName(), llvm::AArch64Subtarget::getMaximumJumpTableSize(), llvm::TargetLoweringBase::getMaximumJumpTableSize(), llvm::AArch64Subtarget::getPrefFunctionAlignment(), 
llvm::AArch64Subtarget::getPrefLoopAlignment(), llvm::AArch64Subtarget::getRegisterInfo(), llvm::EVT::getSimpleVT(), llvm::MVT::getVectorElementType(), llvm::ISD::GlobalAddress, llvm::ISD::GlobalTLSAddress, llvm::AArch64Subtarget::hasFPARMv8(), llvm::AArch64Subtarget::hasFullFP16(), llvm::AArch64Subtarget::hasNEON(), llvm::AArch64Subtarget::hasPerfMon(), llvm::Sched::Hybrid, llvm::MVT::i1, llvm::MVT::i128, llvm::MVT::i16, llvm::MVT::i32, llvm::MVT::i64, llvm::MVT::i8, im, llvm::ISD::INSERT_VECTOR_ELT, llvm::MVT::integer_valuetypes(), llvm::ISD::INTRINSIC_VOID, llvm::ISD::INTRINSIC_W_CHAIN, llvm::ISD::INTRINSIC_WO_CHAIN, llvm::MVT::isFloatingPoint(), llvm::AArch64Subtarget::isLittleEndian(), llvm::AArch64Subtarget::isTargetMachO(), llvm::AArch64Subtarget::isTargetWindows(), llvm::MVT::isVector(), llvm::ISD::JumpTable, llvm::CodeModel::Large, llvm::ISD::LAST_INDEXED_MODE, llvm::TargetLoweringBase::Legal, llvm::ISD::LOAD, llvm::TargetLoweringBase::MaxGluedStoresPerMemcpy, llvm::TargetLoweringBase::MaxStoresPerMemcpy, llvm::TargetLoweringBase::MaxStoresPerMemcpyOptSize, llvm::TargetLoweringBase::MaxStoresPerMemmove, llvm::TargetLoweringBase::MaxStoresPerMemmoveOptSize, llvm::TargetLoweringBase::MaxStoresPerMemset, llvm::TargetLoweringBase::MaxStoresPerMemsetOptSize, llvm::ISD::MUL, llvm::ISD::MULHS, llvm::ISD::MULHU, llvm::ISD::OR, llvm::MVT::Other, llvm::ISD::PRE_INC, llvm::AArch64Subtarget::predictableSelectIsExpensive(), llvm::TargetLoweringBase::PredictableSelectIsExpensive, llvm::ISD::PREFETCH, llvm::TargetLoweringBase::Promote, llvm::ISD::READCYCLECOUNTER, llvm::AArch64Subtarget::requiresStrictAlign(), llvm::ISD::ROTL, llvm::ISD::ROTR, llvm::ISD::SADDO, llvm::ISD::SDIV, llvm::ISD::SDIVREM, llvm::ISD::SELECT, llvm::ISD::SELECT_CC, llvm::TargetLoweringBase::setBooleanContents(), llvm::TargetLoweringBase::setBooleanVectorContents(), llvm::ISD::SETCC, llvm::TargetLoweringBase::setHasExtractBitsInsn(), llvm::TargetLoweringBase::setIndexedLoadAction(), 
llvm::TargetLoweringBase::setIndexedStoreAction(), llvm::TargetLoweringBase::setLoadExtAction(), llvm::TargetLoweringBase::setMaximumJumpTableSize(), llvm::TargetLoweringBase::setMinFunctionAlignment(), llvm::TargetLoweringBase::setOperationAction(), llvm::TargetLoweringBase::setOperationPromotedToType(), llvm::TargetLoweringBase::setPrefFunctionAlignment(), llvm::TargetLoweringBase::setPrefLoopAlignment(), llvm::TargetLoweringBase::setSchedulingPreference(), llvm::TargetLoweringBase::setStackPointerRegisterToSaveRestore(), llvm::TargetLoweringBase::setTargetDAGCombine(), llvm::TargetLoweringBase::setTruncStoreAction(), llvm::ISD::SEXTLOAD, llvm::ISD::SHL, llvm::ISD::SHL_PARTS, llvm::ISD::SIGN_EXTEND, llvm::ISD::SIGN_EXTEND_INREG, llvm::ISD::SINT_TO_FP, llvm::ISD::SMAX, llvm::ISD::SMIN, llvm::ISD::SMUL_LOHI, llvm::ISD::SMULO, llvm::ISD::SRA, llvm::ISD::SRA_PARTS, llvm::ISD::SREM, llvm::ISD::SRL, llvm::ISD::SRL_PARTS, llvm::ISD::SSUBO, llvm::ISD::STACKRESTORE, llvm::ISD::STACKSAVE, llvm::ISD::STORE, llvm::ISD::SUB, llvm::ISD::SUBC, llvm::ISD::SUBE, llvm::AArch64Subtarget::supportsAddressTopByteIgnored(), llvm::ISD::TRAP, llvm::ISD::UADDO, llvm::ISD::UDIV, llvm::ISD::UDIVREM, llvm::ISD::UINT_TO_FP, llvm::ISD::UMAX, llvm::ISD::UMIN, llvm::ISD::UMUL_LOHI, llvm::ISD::UMULO, llvm::ISD::UREM, llvm::ISD::USUBO, llvm::MVT::v16i8, llvm::MVT::v1f64, llvm::MVT::v1i64, llvm::MVT::v2f32, llvm::MVT::v2f64, llvm::MVT::v2i16, llvm::MVT::v2i32, llvm::MVT::v2i64, llvm::MVT::v2i8, llvm::MVT::v4f16, llvm::MVT::v4f32, llvm::MVT::v4i16, llvm::MVT::v4i32, llvm::MVT::v4i8, llvm::MVT::v8f16, llvm::MVT::v8i16, llvm::MVT::v8i32, llvm::MVT::v8i8, llvm::ISD::VAARG, llvm::ISD::VACOPY, llvm::ISD::VAEND, llvm::ISD::VASTART, llvm::ISD::VECREDUCE_ADD, llvm::ISD::VECREDUCE_FMAX, llvm::ISD::VECREDUCE_FMIN, llvm::ISD::VECREDUCE_SMAX, llvm::ISD::VECREDUCE_SMIN, llvm::ISD::VECREDUCE_UMAX, llvm::ISD::VECREDUCE_UMIN, llvm::ISD::VECTOR_SHUFFLE, llvm::MVT::vector_valuetypes(), llvm::ISD::VSELECT, 
llvm::ISD::XOR, llvm::ISD::ZERO_EXTEND, llvm::TargetLoweringBase::ZeroOrNegativeOneBooleanContent, llvm::TargetLoweringBase::ZeroOrOneBooleanContent, and llvm::ISD::ZEXTLOAD.
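The references above pair many `llvm::ISD` opcodes and `llvm::MVT` types with `setOperationAction()` and the `Legal`, `Promote`, `Expand` and `Custom` actions. The following is a minimal, self-contained sketch of that table-driven idea, not LLVM code: `ToyLowering`, the tiny `Op` and `VT` enums, and the specific entries registered in `makeToyLowering()` are all hypothetical and chosen only for illustration.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

// Toy stand-ins; these enums are hypothetical and far smaller than LLVM's.
enum class Action { Legal, Promote, Expand, Custom };
enum Op : std::size_t { OpAdd, OpSDivRem, OpFSin, NumOps };
enum VT : std::size_t { VTi32, VTi64, VTf32, NumVTs };

class ToyLowering {
  // One action per (operation, value type) pair. Value-initialization
  // zeroes the table, so every entry starts out as Action::Legal.
  std::array<std::array<Action, NumVTs>, NumOps> Actions{};

public:
  // Record what the legalizer should do for operation O on type T.
  void setOperationAction(Op O, VT T, Action A) { Actions[O][T] = A; }

  // Look up the recorded action.
  Action getOperationAction(Op O, VT T) const { return Actions[O][T]; }
};

// Plays the role of a target constructor: it only fills in the table.
// The entries below are arbitrary examples, not the AArch64 settings.
ToyLowering makeToyLowering() {
  ToyLowering TL;
  TL.setOperationAction(OpSDivRem, VTi32, Action::Expand);
  TL.setOperationAction(OpSDivRem, VTi64, Action::Expand);
  TL.setOperationAction(OpFSin, VTf32, Action::Expand);
  return TL;
}
```

In this model, anything the constructor does not mention stays `Legal`, so a target only has to list the exceptions.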

Member Function Documentation

◆ allowsMisalignedMemoryAccesses()

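| bool AArch64TargetLowering::allowsMisalignedMemoryAccesses (EVT VT, unsigned AddrSpace=0, unsigned Align=1, bool *Fast=nullptr) const | override virtual |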

Returns true if the target allows unaligned memory accesses of the specified type.

Reimplemented from llvm::TargetLoweringBase.

Definition at line 1055 of file AArch64ISelLowering.cpp.

References llvm::EVT::getStoreSize(), llvm::AArch64Subtarget::isMisaligned128StoreSlow(), llvm::AArch64Subtarget::requiresStrictAlign(), and llvm::MVT::v2i64.

Referenced by getOptimalMemOpType(), and LowerTruncateVectorStore().
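The referenced symbols suggest that the answer depends on whether the subtarget requires strict alignment and on whether misaligned 128-bit stores are slow. Below is a minimal sketch of that shape, under stated assumptions: `ToySubtarget` and `toyAllowsMisaligned` are hypothetical names, the store size is passed as a plain byte count, and the handling that the real function may apply to the alignment argument or to `llvm::MVT::v2i64` is left out.

```cpp
#include <cassert>

// Hypothetical stand-in for the subtarget queries listed under "References".
struct ToySubtarget {
  bool StrictAlign;            // models requiresStrictAlign()
  bool Misaligned128StoreSlow; // models isMisaligned128StoreSlow()
};

// Returns true if a misaligned access of StoreSizeBytes is allowed.
// If Fast is non-null and the access is allowed, *Fast reports whether the
// access is assumed to be fast; on a strict-alignment target *Fast is left
// untouched. Assumption: only 16-byte (128-bit) accesses are ever slow.
bool toyAllowsMisaligned(const ToySubtarget &ST, unsigned StoreSizeBytes,
                         bool *Fast = nullptr) {
  if (ST.StrictAlign)
    return false;
  if (Fast)
    *Fast = !(ST.Misaligned128StoreSlow && StoreSizeBytes == 16);
  return true;
}
```

Separating "allowed" (the return value) from "fast" (the out-parameter) lets a caller accept a legal but slow access or look for an alternative, which matches the `bool *Fast` parameter in the documented signature.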

◆ canMergeStoresTo()

|

inline override virtual |

Returns whether it is reasonable to merge stores into a single store of MemVT size.

Reimplemented from llvm::TargetLoweringBase.

Definition at line 436 of file AArch64ISelLowering.h.

References llvm::MachineFunction::getFunction(), llvm::SelectionDAG::getMachineFunction(), llvm::EVT::getSizeInBits(), llvm::Function::hasFnAttribute(), and llvm::Attribute::NoImplicitFloat.
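The references above point to two inputs: the `NoImplicitFloat` function attribute and the bit width of `MemVT`. The sketch below shows one plausible way to combine them. It assumes that a merged store wider than 64 bits would need a floating-point/SIMD register and is therefore refused when implicit floating-point use is disabled. `toyCanMergeStoresTo` is a hypothetical helper that takes both inputs as plain values, and the 64-bit limit is an assumption of this sketch.

```cpp
#include <cassert>

// Hypothetical model of the merge decision.
// MemSizeInBits   : width of the store that merging would produce.
// NoImplicitFloat : true if the function carries the NoImplicitFloat attribute.
constexpr bool toyCanMergeStoresTo(unsigned MemSizeInBits,
                                   bool NoImplicitFloat) {
  // Assumed limit: with implicit FP use disabled, stay within 64 bits.
  return NoImplicitFloat ? MemSizeInBits <= 64 : true;
}

// Compile-time checks of the model itself.
static_assert(toyCanMergeStoresTo(128, false), "wide merge allowed by default");
static_assert(!toyCanMergeStoresTo(128, true), "wide merge refused without FP");
static_assert(toyCanMergeStoresTo(64, true), "64-bit merge always allowed");
```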

◆ CCAssignFnForCall()

| CCAssignFn * AArch64TargetLowering::CCAssignFnForCall (CallingConv::ID CC, bool IsVarArg) const |

Selects the correct CCAssignFn for a given CallingConvention value.

Definition at line 2979 of file AArch64ISelLowering.cpp.